Archive: 2023

Learning more about Teaching AI

By Joe Tise, PhD, Senior Education Researcher, IACE

Driving into the heart of Washington, D.C. is a unique experience. Mixed in with the thousands of business people, sightseers, and the occasional politician shuffling to and fro is a sense of optimism for what could be. Every significant social, policy, and/or economic movement that had national (and often international) influence went through our nation’s capital.

As I arrived at the Teaching Inclusive AI in Computer Science event, co-hosted by the National Economic Council and the U.S. National Science Foundation (NSF) (and organized by CSTA) on the White House grounds, I wondered how the CS education landscape would look 10 years from now, and how the presenters and attendees would prove pivotal in shaping it. It was clear by the end of the event that everyone there shared two core characteristics: a deep passion for CS education and an unwavering optimism for the future.

The event kicked off with speeches from several members of the Biden-Harris Administration (e.g., Chirag Parikh [Deputy Assistant to the President and Executive Secretary, National Space Council], Ami Fields-Meyer [Senior Policy Advisor, Office of the Vice President], and Seeyew Mo [Assistant National Cyber Director, Office of the National Cyber Director]). Each emphasized the importance of a CS- and AI-literate citizenry and discussed how the Biden-Harris Administration plans to support CS education, AI education, and the teaching of AI within computer science. One of the highlighted efforts was an executive order signed by President Biden targeting safe and trustworthy AI development.

To make the policy discussion more concrete, we next heard from a panel of four CS teachers from across the country who represented both middle and high school level CS. They discussed how they have seen CS, and particularly AI, influence many subjects in school beyond standalone CS courses. One teacher pointed out that their school district adopted a total ban on generative AI tools in an attempt to prevent academic misconduct. The teachers agreed that while the district’s motivation may be noble, the ban would likely disadvantage the students in the long run because they would not have the opportunity to learn how generative AI works—and more importantly, learn about its limitations. The panel discussion ended by acknowledging the continued struggle to recruit and retain CS teachers at both middle and high school levels.

Finally, the plethora of work remaining requires funding. To this point, Margaret Martonosi (NSF Chief Operating Officer) and Erwin Gianchandani (Assistant Director of the CISE directorate) discussed how NSF as a whole, and particularly the CISE directorate, is prioritizing CS education research, with reference to the recently released Dear Colleague Letter: Advancing education for the future AI workforce (EducateAI).

Suffice it to say, I left the White House grounds even more inspired and hopeful for the future of CS education, and for our nation as a whole. We have the vision, we have the motivation, and the groundwork is laid. Now we need to act. You can read a summary readout of the event on the White House website here.

Constructivism and Sociocultural

Behaviorism highlighted the influence of the environment, information processing theory essentially ignored it, and social-cognitive theory tried to strike a balance between the two by acknowledging its potential influence. Constructivist (also known as sociocultural) theorists take it a step further.

According to constructivist theories (which can either focus more on individual or on societal construction of knowledge; Phillips, 1995), knowledge and learning are inherently dependent on the cultural context (i.e., environment) to which one belongs. That may sound like repackaged behaviorism, but “the environment” to a constructivist goes far beyond stimuli, rewards, and punishments.

In constructivist theories of learning, “the environment” includes our family dynamics, friends, broad cultures and specific subcultures of groups with which we associate, and numerous other factors that all influence our learning. Although not all constructivist theories agree on a single definition of learning, for our purposes a basic definition suffices: learning is development through internalization of the content and tools of thinking within a cultural context.

Constructivist theories posit that one’s culture provides the tools of thinking, which in turn influence how we learn—or “construct” knowledge. Perhaps the best-known constructivist theorist is Lev S. Vygotsky. Vygotsky authored many papers and two books, which were eventually published together posthumously as a single book titled Mind in Society.

In this collection of Vygotsky’s work, concepts such as internalization and the zone of proximal development (ZPD) are introduced (Vygotsky, 1978). Briefly, constructivist learning theories posit that something is learned when a person internalizes its meaning—internalization is an independent developmental achievement.

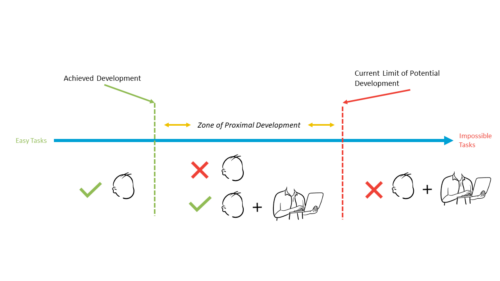

Further, the ZPD encompasses tasks that a learner cannot yet complete independently but can complete with help (see the accompanying figure). Constructivist theorists target the ZPD for optimal learning, and when this is done successfully, learners construct their own meaning of the new information, thereby internalizing it.

Vygotsky asserted that thoughts are words. That is, thoughts are inextricably tied to the language we use. In his view, one cannot “think” a thought without first having a word for the thought. For example, one cannot think about a sandwich without first having internalized the meaning of the word sandwich. In this way, Vygotsky (and by extension many constructivist theorists) views words as the “tools” for cognition and thus higher-order thinking.

Strengths

- Constructivist theories are more attentive to learners’ past experiences and cultural contexts than the other major learning theories discussed in this blog series. Because of that, they can provide solid theoretical footing to many research projects focused on addressing disparities.

- The zone of proximal development, internalization, and consideration of words as tools of thought are compelling concepts introduced by constructivist theories.

- The focus on culture and words as tools of thought in constructivist theories can help explain the variety of cognition patterns observed across cultures (e.g., different arithmetic strategies across cultures).

Limitations

- Although constructivist theories prove strong in many aspects, they are less applicable to some forms of learning. For example, constructivists are almost exclusively concerned with higher-order learning, and they largely cannot account for learning exhibited by animals (as behaviorist theories can).

- Constructivist theories of learning lie on a scale, from more radical to more conservative, regarding the influence of a person’s individual history. While this is not necessarily a bad thing, it does muddy the waters when one refers simply to “constructivism,” which in fact encompasses a very wide swath of perspectives on learning.

Potential Use Cases in Computing Education

- Research: A researcher may attempt to identify first-year computer science students’ individual zones of proximal development related to coding, and see if teaching coding within this zone enhances students’ motivation, interest, and intention to pursue the content further compared to teaching content outside the zone.

- Practice: Teachers need to ensure all students have (accurately) internalized the meanings of key vocabulary terms/concepts related to their content (e.g., what “objects” or “classes” really are) before they can rely on students to properly use the concept in higher-order, complex problem solving.
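As one illustration of the vocabulary a student would need to internalize, the distinction between a “class” and an “object” can be shown in a few lines. This is only a minimal sketch: the `Student` class, its attributes, and the names used are hypothetical and chosen purely for illustration.

```python
class Student:
    """A class is a blueprint: it describes what every student has and can do."""

    def __init__(self, name):
        self.name = name
        self.completed = []  # each object gets its own list

    def complete(self, lesson):
        # Record a finished lesson on this particular object only.
        self.completed.append(lesson)


# Objects are individual instances built from the same blueprint.
ada = Student("Ada")
grace = Student("Grace")
ada.complete("loops")

print(type(ada) is type(grace))         # both objects share one class
print(ada.completed, grace.completed)   # but each keeps its own state
```

A student who can explain why the two lists differ even though both objects come from the same class has likely internalized the terms well enough to use them in more complex problem solving.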

Influential theorists:

- Lev Semyonovich Vygotsky (1896 – 1934)

- John Dewey (1859 – 1952)

- Jean Piaget (1896 – 1980)

Recommended seminal works:

Cobb, P. (1994). Where Is the Mind? Constructivist and Sociocultural Perspectives on Mathematical Development. Educational Researcher, 23(7), 13–20. https://doi.org/10.3102/0013189X023007013

Phillips, D. C. (1995). The Good, the Bad, and the Ugly: The Many Faces of Constructivism. Educational Researcher, 24(7), 5-12. https://doi.org/10.3102/0013189X024007005

Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes. Harvard University Press. https://books.google.com/books?id=Irq913lEZ1QC

References

Phillips, D. C. (1995). The Good, the Bad, and the Ugly: The Many Faces of Constructivism. Educational Researcher, 24(7), 5-12. https://doi.org/10.3102/0013189X024007005

Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes. Harvard University Press. https://books.google.com/books?id=Irq913lEZ1QC

Social Cognitive Theory

Presented by Joe Tise, PhD, Educational Psychology & Senior Education Researcher at CSEdResearch.org

In light of these two influential (albeit largely opposing) theories of learning, we see that both theories account for unique aspects of learning despite their limitations. Still, neither behaviorist nor information-processing theories account for one prominent form of learning, with which all people have experience—learning by observation. We may rightfully wonder then: is there a new theory that incorporates the strongest elements of each and can explain how humans learn through observing others? Enter: Social-cognitive theory (SCT; Bandura, 1986).

SCT is largely attributed to the prominent psychologist Albert Bandura, but many other theorists have since produced high quality research that has supported and refined the theory, especially within educational contexts (e.g., Barry Zimmerman, Paul Pintrich, Dale Schunk).

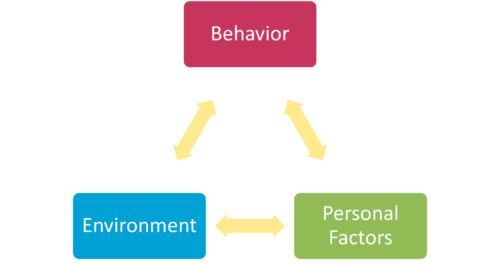

SCT posits that human behavior is one part of a triadic, reciprocal relationship with the environment (think behaviorism) and personal factors (IPT is represented here). The accompanying figure represents this relationship visually.

Whereas behaviorist theories of learning drew only a one-way connection between environment and behavior (i.e., environment determines behavior) and IPT essentially stayed within the cognitive system entirely, SCT asserts that the environment, behavior, and personal factors (e.g., ways of thinking, attitudes, emotions, metacognition) all influence and partially determine each other.

Support for this assertion comes from decades of research kicked off by the seminal Bobo doll experiments at Stanford (Bandura et al., 1961, 1963). These groundbreaking experiments were some of the first to show empirically that humans do learn through observation.

This evidence directly contradicted a basic tenet of behaviorism: that organisms only learn after the environment acts upon them directly (e.g., through direct punishment or reward). These experiments further showed that behavior (the children’s, in this case) is influenced not just by the environment (in these experiments, the presence of an aggressive-acting adult), but also by personal factors (in these experiments, the children’s gender). Thus, learning in SCT is inherently tied to the context and learners’ personal factors.

From these basic tenets, we get the SCT definition of learning: a change or potential for change in behavior or cognition, situated within specific contexts.

Strengths

- Social-cognitive theory is deceptively simple—it involves just three overarching components but each component represents countless influential factors.

- SCT incorporates many of the strengths of both behaviorism and IPT and extends both theories in unique ways. It is the only theory of learning that sufficiently explains observational learning. It is also highly relevant to both research and practice.

- SCT explains many complex human phenomena, such as self-efficacy, self-regulated learning, stereotype threat, and the influence of role models. Others have adapted SCT to other realms, for example career development, where it is referred to as social-cognitive career theory (SCCT; Lent et al., 1994, 2002).

Limitations

- SCT can be a bit more abstract than behaviorism or IPT and thus the implications for practice are sometimes less clear. This is especially true for researchers and practitioners who are unfamiliar with learning theories.

- Further, because of its deceptive complexity, a comprehensive test of SCT within a single study is more difficult than a comparable test of behaviorism or IPT. Controlling for the numerous environmental, personal, and behavioral factors that SCT might identify as relevant would be a heavy lift for even the most well-funded research study.

Potential Use Cases in Computing Education

- Research: A study could investigate how characteristics of a role model (e.g., race/ethnicity, gender, age) moderate the influence that role model has on underrepresented children’s intentions to pursue a particular STEM career path.

- Practice: Teachers, mentors, and parents should highlight examples of when a student successfully overcame a challenge, and provide verbal encouragement and/or modeling when the student faces new challenges. These behaviors will support the student’s self-efficacy, and ultimately their persistence in the domain or on the task.

Influential theorists:

- Albert Bandura (1925 – 2021)

- Barry J. Zimmerman (1942 – present)

- Paul R. Pintrich (1953 – 2003)

Recommended seminal works:

Bandura, A., Ross, D., & Ross, S. A. (1961). Transmission of aggression through imitation of aggressive models. Journal of Abnormal and Social Psychology, 63(3), 575–582.

Bandura, A. (1977). Self-efficacy: Toward a unifying theory of behavioral change. Psychological Review, 84(2), 191–215. https://doi.org/10.1037/0033-295X.84.2.191

Bandura, A. (1986). Social foundations of thought & action: A social cognitive theory. Pearson Education.

Bandura, A. (1989). Human agency in social cognitive theory. American Psychologist, 44(9), 1175–1184. https://doi.org/10.1037/0003-066X.44.9.1175

References

Bandura, A., Ross, D., & Ross, S. A. (1961). Transmission of aggression through imitation of aggressive models. Journal of Abnormal and Social Psychology, 63(3), 575–582.

Bandura, A., Ross, D., & Ross, S. A. (1963). Imitation of film-mediated aggressive models. The Journal of Abnormal and Social Psychology, 66(1), 3–11. https://doi.org/10.1037/h0048687

Bandura, A. (1986). Social foundations of thought & action: A social cognitive theory. Pearson Education.

Lent, R. W., Brown, S. D., & Hackett, G. (1994). Toward a Unifying Social Cognitive Theory of Career and Academic Interest, Choice, and Performance. Journal of Vocational Behavior, 45(1), 79–122. https://doi.org/10.1006/jvbe.1994.1027

Lent, R. W., Brown, S. D., & Hackett, G. (2002). Social Cognitive Career Theory. In D. Brown (Ed.), Career Choice and Development (4th ed., pp. 255–311). Jossey-Bass.

Information Processing Theory

Presented by Joe Tise, PhD, Educational Psychology & Senior Education Researcher at CSEdResearch.org

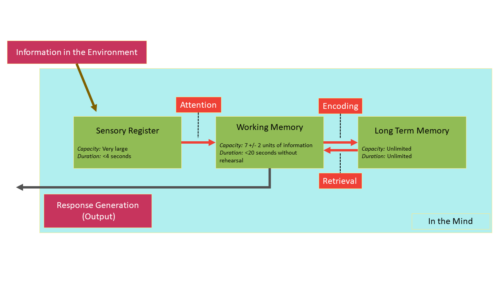

The stark limitations of behaviorist theories of learning gave rise (in part) to cognitive theories of learning, the most prominent of which is information processing theory (IPT) (Atkinson & Shiffrin, 1968). As you will see, IPT is analogous to a computer system in many ways. IPT posits three primary “stores” of memory and three primary cognitive “processes.” The three memory stores include the:

- Sensory register (like a motion detector or thermostat)

- Working memory (like RAM)

- Long-term memory (like a hard drive)

The three processes include:

- Attention (like selecting which folder or drive to work in)

- Encoding (like writing to a disk)

- Retrieval (like reading from a disk)

When a person encounters information (broadly construed), it exists first in the sensory register, which is informed by the five physical senses, as shown in the accompanying figure.

Information in the sensory register persists only as long as the senses actively perceive the information (e.g., the shapes of words on a page). Once the senses stop perceiving the information, the sensory register is cleared.

So how does one learn anything, then? The first primary cognitive process must be invoked—attention. Information is transferred from the sensory register to working memory when we direct attention toward the information—and only at this point do we become conscious of it. This is analogous to how a computer transfers information from a physical sensor (sensory register) to its RAM (working memory) for manipulation.

You have likely already heard that working memory (WM) is limited to 7 ± 2 pieces of information (Miller, 1956), and this fact illustrates one relatively strict limitation of our cognitive system. Working memory is in many ways a “bottleneck” to human learning and cognitive functioning. Information persists in WM for about 20–30 seconds without rehearsal or other cognitive manipulations of the information. As with a computer’s RAM, it is limited in capacity and will be periodically cleared.

If we want the information to persist longer than that, we must apply the second primary cognitive process, encoding, to the information so that it can move from WM to long-term memory (LTM), much like writing to a hard drive. LTM capacity is theoretically unlimited and information within LTM can persist forever.

Finally, if we wish to use information in LTM, we must invoke the third primary cognitive process: retrieval. Retrieval brings information out of LTM and back into WM so that it is once again conscious to us and can be manipulated or articulated via speech, writing, actions, or other means. Drawing the information from LTM into WM is akin to reading information from a hard drive.

Only now can one understand the IPT definition of learning. IPT views human learning as the transfer (i.e., encoding) of information from working memory into long-term memory.
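Because IPT is described above by analogy to a computer system, the three stores and three processes can be sketched as a small toy program. This is only an illustrative sketch, not a validated cognitive model: the class name `InformationProcessor`, its method names, and the fixed capacity of 7 are assumptions made for the example, and time-based decay of working memory is left out.

```python
from collections import deque


class InformationProcessor:
    """Toy model of the three IPT memory stores and three processes."""

    def __init__(self, wm_capacity=7):
        # Sensory register: only what the senses perceive right now.
        self.sensory_register = set()
        # Working memory: limited capacity; the oldest item is displaced.
        self.working_memory = deque(maxlen=wm_capacity)
        # Long-term memory: treated here as unlimited and persistent.
        self.long_term_memory = {}

    def perceive(self, *stimuli):
        # New perception overwrites the register (it is cleared otherwise).
        self.sensory_register = set(stimuli)

    def attend(self, stimulus):
        # Attention: sensory register -> working memory.
        if stimulus in self.sensory_register:
            self.working_memory.append(stimulus)
            return True
        return False

    def encode(self, cue, item):
        # Encoding: working memory -> long-term memory ("learning" in IPT).
        if item in self.working_memory:
            self.long_term_memory[cue] = item
            return True
        return False

    def retrieve(self, cue):
        # Retrieval: long-term memory -> working memory.
        item = self.long_term_memory.get(cue)
        if item is not None:
            self.working_memory.append(item)
        return item


learner = InformationProcessor()
learner.perceive("for loop", "hallway noise")
learner.attend("for loop")               # only the attended item reaches WM
learner.encode("iteration", "for loop")  # stored in LTM under a cue
learner.perceive()                       # senses move on; register is cleared
learner.working_memory.clear()           # WM is eventually cleared too
print(learner.retrieve("iteration"))     # the item is back in WM via retrieval
```

In this sketch, "hallway noise" is perceived but never attended to, so it never reaches working memory and cannot be encoded, which mirrors the role attention plays in the theory.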

Strengths

Information processing theory provides a succinct framework for understanding how the human brain processes information. While behaviorists completely disregard the cognitive domain, IPT attempts to directly explain it. Tenets of IPT are ripe for empirical investigation (e.g., the capacity and duration of working memory have been studied countless times).

IPT also is directly applicable to many fields beyond just learning, and its tenets are leveraged in domains such as user experience research, driving safety courses, and brain health assessments for sports injuries and dementia screening.

Limitations

While information processing theory provides explanations for many of the cognitive phenomena we encounter during learning and daily life, some limitations still exist. For example, IPT faces a supposed “homunculus” problem. That is, models of working memory (yes, there are sub theories of IPT to further specify the working memory component) detail a central executive component, which controls the two other components of working memory (see Baddeley, 2003 for more detail).

But this raises the question—what controls the central executive? Herein lies the problem. Such models of working memory appear to rely on a homunculus—a small imaginary “being” inside our brains that controls the central executive, which in turn controls the other components of working memory.

This blog post is far too broad to properly detail models of working memory, so for our purposes just know that critics of IPT cite the homunculus problem as at least a needed point of further theoretical refinement.

Potential Use Cases in Computing Education

- Research: How does a student’s working memory capacity relate to their coding skill/accuracy?

- Practice: Direct attention to salient features of content (to ensure attention is on the correct features of content), provide and model use of learning strategies (to promote encoding), and use low-stakes practice quizzes/questions often to exercise students’ retrieval process.

Influential theorists:

- Richard C. Atkinson (1929 – present)

- Richard M. Shiffrin (1942 – present)

- Alan Baddeley (1934 – present)

Recommended seminal works:

Atkinson, R. C., & Shiffrin, R. M. (1968). Human memory: A proposed system and its control processes. In K. W. Spence & J. T. Spence (Eds.), The psychology of learning and motivation: Advances in research and theory, Vol II (pp. 89–195). Academic Press.

Baddeley, A. D., & Hitch, G. (1974). Working memory. In G. H. Bower (Ed.), Psychology of Learning and Motivation (Vol. 8, pp. 47–89). Academic Press. https://doi.org/10.1016/S0079-7421(08)60452-1

Baddeley, A. (1992). Working Memory. Science, 255(5044), 556–559. https://doi.org/10.1126/science.1736359

Miller, G. A. (1956). The magical number seven, plus or minus two: Some limits on our capacity for processing information. Psychological Review, 63, 81-97. https://psychclassics.yorku.ca/Miller/

Shiffrin, R. M., & Atkinson, R. C. (1969). Storage and retrieval processes in long-term memory. Psychological Review, 76(2), 179–193.

References

Atkinson, R. C., & Shiffrin, R. M. (1968). Human memory: A proposed system and its control processes. In K. W. Spence & J. T. Spence (Eds.), The psychology of learning and motivation: Advances in research and theory, Vol II (pp. 89–195). Academic Press.

Baddeley, A. (2003). Working memory: Looking back and looking forward. Nature Reviews Neuroscience, 4, 829–839. https://www.nature.com/articles/nrn1201

Miller, G. A. (1956). The magical number seven, plus or minus two: Some limits on our capacity for processing information. Psychological Review, 63, 81-97. https://psychclassics.yorku.ca/Miller/

Behaviorism Introduction

Presented by Joe Tise, PhD, Educational Psychology & Senior Education Researcher at CSEdResearch.org

At least surface-level familiarity with Pavlov’s experiments and the principles of classical and operant conditioning has become almost ubiquitous among the general public. What many may not know, however, is that classical and operant conditioning are the two primary behaviorist theories of learning. To a behaviorist, only observable behavior is worthy of (or even amenable to) scientific study. From this philosophy stems the behaviorist definition of learning: a relatively permanent change in behavior that is caused by experience. Behaviorist theories of learning exclude any attempts to examine cognition or cognitive processes because they are not directly observable.

The theory of classical conditioning was born from Pavlov’s experiments with dogs. Pavlov discovered that his dogs began to associate food with the sound of a bell, and that upon ringing the bell, his dogs would salivate. Thus, a change in behavior (salivation) was caused by experience (presentation of food shortly after the bell). Note that classical conditioning only applies to involuntary (reflexive) behavior (e.g., fear, physiological responses). Operant conditioning (think B.F. Skinner) extends the theory into voluntary behavior. In a series of classic experiments, psychologist B.F. Skinner trained rats to perform novel behaviors in exchange for food (reinforcement) or in anticipation of an electric shock (punishment) (Skinner, 2019).

Operant conditioning still relies on the mechanism of association, but accounts for novel (i.e., not innate) behavior. Indeed, operant conditioning is at the heart of nearly all animal training (e.g., dogs, show animals) and has real-world applications for classroom management. For example, a teacher may reward students with a small toy if they participate in class and may punish students (e.g., by issuing a demerit) for acting out. If this reinforcement and/or punishment is successful and the student’s behavior changes, the behaviorist would say the behavior change is evidence of learning. Although purely behaviorist research studies are less common today than they were 60–70 years ago, elements of behaviorism are still prevalent in some fields and sub-disciplines, including game-based learning (e.g., through badges, scoring) (Coşkun, 2019; Hulsbosch et al., 2023; Leeder, 2022).

Strengths

There are several strengths of behaviorist theories of learning.

- First, research has shown that behaviorist conceptions of learning are generalizable to not just multiple cultures, but indeed a wide variety of animals. That is, learning by association and reinforcement/punishment is not uniquely human, and therefore behaviorist theories of learning are by far the most generalizable.

- And since behaviorists study only what can be observed directly (i.e., behavior), behaviorist theories are arguably the best-positioned to achieve replicability—a known problem in the psychology fields (Open Science Collaboration, 2015).

- Behaviorist principles are directly applicable to the classroom via classroom management techniques. Any experienced K-12 teacher will tell you that classroom management is a top priority, and there is ample opportunity to apply behaviorism throughout the instructional process.

- Behaviorism arose as a direct counter to eugenic philosophies, and therefore was one of the first DEI-minded approaches to psychological/educational research. To this effect, John Watson (1930) famously said: “Give me a dozen healthy infants, well-formed, and my own specified world to bring them up in and I’ll guarantee to take any one at random and train him to become any type of specialist I might select – doctor, lawyer, artist, merchant-chief and, yes, even beggar-man and thief…”

Limitations

Noteworthy limitations to behaviorism also exist. For example:

- Behaviorist theories cannot account for cognitive processing—and explicitly exclude study of cognition. Cognitive/educational research, and even simple experience, tells us that human learning is much more complex than involuntary associations and reinforcement/punishment schedules.

- The notion of observational learning (i.e., learning by watching someone else) is a prime example of the shortcomings of behaviorism. Behaviorism cannot explain observational learning.

- Finally, experienced students and teachers understand many tasks require complex problem solving, learning strategies, and metacognition to complete. Behaviorism falls short of even conceptualizing these constructs, let alone explaining them.

Potential Use Cases in Computing Education

- Research: An intervention based in classical conditioning designed to reduce negative physiological responses (anxiety) to computers/computer science. These negative physiological responses would also influence students’ self-efficacy, so a link could be made to social-cognitive theory as well.

- Practice: A teacher could begin each class with a pleasant story, song, comment, snack, or even scent to elicit a positive emotional response from their students. After repeated exposure (i.e., conditioning), the students should associate positive feelings with the classroom, subject, or teacher.

Influential theorists

- John B. Watson (1878 – 1958)

- B.F. Skinner (1904 – 1990)

- Edward L. Thorndike (1874 – 1949)

Recommended seminal works

- Watson, J. B. (1913). Psychology as the behaviorist views it. Psychological Review, 20(2), 158–177. https://doi.org/10.1037/h0074428

- Skinner, B. F. (1965). Science and human behavior. Simon and Schuster.

- Thorndike, E. L. (1898). Animal intelligence: An experimental study of the associative processes in animals. The Psychological Review: Monograph Supplements, 2(4), i–109. https://doi.org/10.1037/h0092987

References

Coşkun, K. (2019). Conditioning Tendency Among Preschool and Primary School Children: Cross-Sectional Research. Interchange, 50(4), 517–536. https://doi.org/10.1007/s10780-019-09373-1

Hulsbosch, A., Beckers, T., De Meyer, H., Danckaerts, M., Van Liefferinge, D., Tripp, G., & Van Der Oord, S. (2023). Instrumental learning and behavioral persistence in children with attention‐deficit/hyperactivity‐disorder: Does reinforcement frequency matter? Journal of Child Psychology and Psychiatry, 64(11), 1–10. https://doi.org/10.1111/jcpp.13805

Leeder, T. M. (2022). Behaviorism, Skinner, and Operant Conditioning: Considerations for Sport Coaching Practice. Strategies, 35(3), 27–32. https://doi.org/10.1080/08924562.2022.2052776

Open Science Collaboration. (2015). Estimating the reproducibility of psychological science. Science, 349(6251), aac4716. https://doi.org/10.1126/science.aac4716

Skinner, B. F. (2019). The Behavior of Organisms: An Experimental Analysis. B. F. Skinner Foundation. https://books.google.com/books?id=S9WNCwAAQBAJ

Watson, J. B. (1930). Behaviorism (Revised edition). University of Chicago Press.

Introduction to Learning Theories Series

Presented by Joe Tise, PhD, Educational Psychology & Senior Education Researcher at CSEdResearch.org

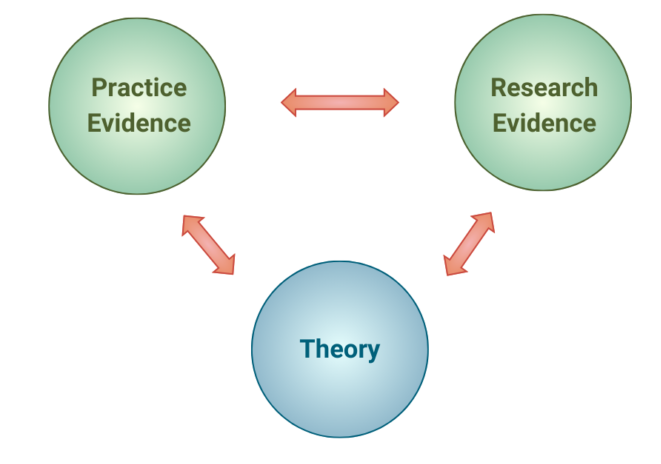

If data is a pile of bricks, theory is a building plan. Used together, a house can be built and a valid representation of truth can be uncovered.

The traditional view of education research would say data without theory is no more useful than a pile of bricks without a building plan. This understanding is at the heart of traditional quantitative educational and psychological research. Quantitative educational researchers view theory as integral to the relationship between research and practice because it gives rise to causal hypotheses and, in turn, informs action.

However, one may (convincingly) argue that recent developments in artificial intelligence (AI), data mining, machine learning (ML), and large language models (LLMs) uncover deep insights and relationships despite not being driven by a particular a priori theoretical perspective. While this is certainly true, I argue that researchers still must construct theoretical models (broadly construed) to make sense of the patterns and insights uncovered by these empirical methods. There is some inherent utility in using AI or ML to discover, for example, that students’ user log data in a learning management system can predict their eventual GPA or likelihood of dropping the course. But understanding why these relationships exist requires theoretical musing, which so far cannot be accomplished via AI or ML.

Further, as a qualitative researcher may be quick to point out, some research questions are simply too cutting-edge to be grounded in theory a priori. Save for truly exploratory research (where very little or even no prior research exists), educational researchers will tend to engage a theory either as a guide prior to data collection or as an explanatory mechanism after data analysis, whether that theory is robust with decades of empirical support or more fledgling and known only to the researcher.

As one manifestation of educational research, computer science education (CSEd) research needs to be grounded in established educational theory and/or generate new theory where established theory falls short. Fortunately, nearly 100 years of educational research have already passed. The fruits of this research are four prominent theories about how learning occurs: Behaviorism, Information-processing, Social-cognitive, and Constructivism.

In this four-part series, I introduce and briefly overview each theory. In doing so, I put forward a paraphrased definition of the (nebulous) term “learning” associated with each theory, outline central assumptions, explicate strengths and limitations, and recommend several seminal works for each theory of learning.

I encourage education researchers who wish to research the learning phenomenon to pay attention to each. If you have limited time, however, I suggest paying special attention to the posts and subsequent recommended readings on information-processing and social-cognitive theories, as these two theories undergird much of contemporary educational research (whether these theories are explicitly mentioned in publications or not) and have shown prowess in explaining the complicated web of influences on human learning.

Series:

Reimagining CS Pathways: High School and Beyond

In the past four years, the proportion of US high schools offering at least one computer science (CS) course increased from one-third to one-half (source), and more growth is expected. Simultaneously, the field of computer science has shifted significantly and we have continued to learn more about what it means to teach computer science with equitable outcomes in mind. One challenge in CS education is ensuring that curriculum and pedagogy adapt to these shifting grounds; it is easy to imagine the frustration of a student who discovers that their high school CS instruction has left them poorly prepared for future opportunities to learn computer science.

We are pleased to announce, in collaboration with the Computer Science Teachers Association (CSTA), our new NSF-funded project to address this issue. With Bryan Twarek (PI) and Dr. Monica McGill (Co-PI) at the helm, the Reimagining CS Pathways: High School and Beyond project has the long-term goal of articulating a shared vision for introductory high school CS instruction that could be used to fulfill a high school graduation requirement, as well as for the alignment between that content and the two AP CS courses and college-level CS courses.

Our work will not be in isolation. Reimagining CS Pathways: High School and Beyond includes three convenings of K12 teachers and administrators, instructors at 2- and 4-year colleges, curriculum developers, industry representatives, state CS supervisors, and other vested parties. Written reports of these convenings will be shared with the public. Additionally, the project will create:

- Recommendations for the content of an introductory high school CS course

- Descriptions of high school CS courses beyond an introductory course, including suggested course outcomes

- Recommendations for possible adjustments to the CSTA standards and the AP program

- A framework for the process of creating similar course pathways in the future

Undergirding this work is a commitment to more equitable CS instruction, ensuring that all students – including those who have historically been less likely to study CS – will have access to these CS pathways. A more coordinated approach to high school and college level CS instruction is also more likely to meet the needs of industry and society as a whole.

This project expects to have its recommendations and framework available in the summer of 2024.

Click here to learn more about this project.

If you are interested in participating, please reach out via our contact form or, for more information, contact [email protected].

“But They Just Aren’t Interested in Computer Science” (Part Three)

Written by: Julie Smith

Note: this post is part of a series about the most-cited research studies related to K12 computer science education.

It’s discouraging to learn that children as young as age six express the belief that boys are better than girls at programming and at robotics, and girls have less interest in or belief in their ability to succeed in computing.

But the good news from the study Programming experience promotes higher STEM motivation among first-grade girls is that, in the researchers’ experiment, it was actually not that difficult to improve girls’ interest and self-efficacy: all it took was twenty minutes in the lab with a cute robot that they could program with a smartphone. After that intervention, their interest and self-efficacy were statistically indistinguishable from boys’; the same was not the case for girls who engaged in another activity unrelated to technology.

There’s a reason this article is one of the most commonly cited in the computer science education literature: the representation rates of women in computing – from high school courses through college majors and into the workforce – remain stubbornly low. This article suggests that, while stereotypes are adopted early, a relatively simple intervention for young children could perhaps be enough to overcome the effects of those stereotypes on girls’ interest in computing.

Further Reading

- The role of recognition in disciplinary identity for girls

- Subtle Linguistic Cues Increase Girls’ Engagement in Science

Series

“But They Just Aren’t Interested in Computer Science” Part One

“But They Just Aren’t Interested in Computer Science” Part Two

“But They Just Aren’t Interested in Computer Science” (Part Two)

Written by: Julie Smith

Note: this post is part of a series about the most-cited research studies related to K12 computer science education.

The study’s title says it all: “Gender stereotypes about interests start early and cause gender disparities in computer science and engineering.” It’s worth noting that the careful design of the studies bolsters the case: this work includes both surveys and experiments, allowing the researchers to comment on causality. The combination of surveys and interventions makes it possible to conclude that the stereotype drives the lower rate of interest, rather than a student’s inherently lower interest leading them to generate a stereotype by projecting their attitude onto others. Additionally, the diverse subject pool makes it more likely that the findings are widely applicable.

The researchers found that stereotypes suggesting that boys are more interested in computer science exist from at least the third grade. Further, these stereotypes make it less likely for girls to study computer science, an effect mediated by the girls’ decreased sense that CS is for them.

Significantly, stereotypes about interest in computer science were a stronger predictor of a student’s intent to study computer science than stereotypes about ability. The authors do point out that there is a stronger cultural norm against expressing ability stereotypes than interest stereotypes, which may make it harder to root out the interest stereotypes. At the same time, the finding that student interest in studying computer science could be affected by their experiences in an experiment implies that interventions designed to counteract stereotypes may very well be effective.

The fact that this study is one of the most-cited K12 computer science education research studies suggests that its message of the importance of recognizing the role of interest stereotypes has resonated with many other researchers. The next step is to determine which types of interventions are most effective at breaking down interest stereotypes.

Further Reading

- Gender Stereotypes Influence Children’s STEM Motivation

- Beyond students: how teacher psychology shapes educational inequality

Series

“But They Just Aren’t Interested in Computer Science” (Part One)

“But They Just Aren’t Interested in Computer Science” (Part Three)

Emerging Promising Practices for CS Integration

Our recently accepted paper, Emerging Practices for Integrating Computer Science into Existing K-5 Subjects in the United States, will be presented at WiPSCE 2023 in Cambridge, England.

This particular qualitative work, conducted by Monica McGill, Laycee Thigpen, and Alaina Mabie of CSEdResearch.org, included interviews with researchers and curriculum designers (n=9) who have engaged deeply in K-5 CS integration for several years. Their perspectives were analyzed and synthesized to inform our results.

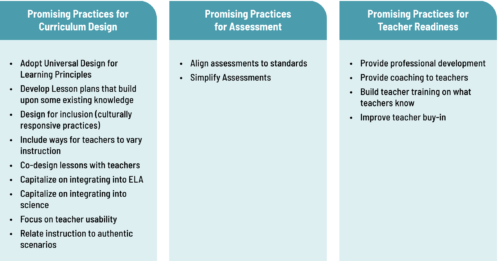

Several promising practices emerged for designing curriculum, creating assessments, and preparing teachers to teach in a co-curricular manner. These include ways for teachers to vary instruction, integrating into core (and oft-tested) language arts and mathematics, and simplifying assessments. Many of the findings are born of the need to help new teachers become comfortable teaching a new subject integrated into their other subjects.

Generally, the promising practices that emerged included adopting Universal Design for Learning practices, including ways for teachers to take the curriculum and vary instruction to fit their comfort levels as they learn to teach CS integration, and co-designing lessons with teachers. The experts also suggested capitalizing on integration into language arts, since it is a highly tested and critical subject for learning.

Figure 1. General findings.

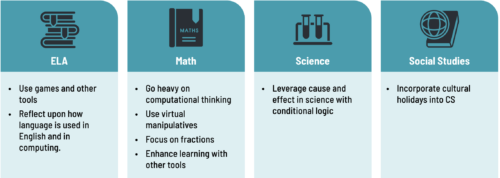

For more specific findings, the experts suggested focusing on fractions for math, leveraging cause and effect in science to teach conditional logic, and reflecting upon how language is similarly used in English and in computing.

Figure 2. Subject specific findings.

You can read the full paper (including our methodology and profiles of our experts) here.

Monica M. McGill, Laycee Thigpen, and Alaina Mabie. 2023. Emerging Practices for Integrating Computer Science into Existing K-5 Subjects in the United States. In The 18th WiPSCE Conference on Primary and Secondary Computing Education Research (WiPSCE ’23), September 27–29, 2023, Cambridge, United Kingdom. ACM, New York, NY, USA, 10 pages. https://doi.org/10.1145/3605468.3609759 (effective after September 27th, 2023).

This material is based upon work supported by Code.org. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of Code.org.

We acknowledge and thank Brenda Huerta for her assistance with the literature review.